We tend to entrust our cloud infrastructure to the titans of the industry, relying on their tried-and-tested expertise, resources, resilience and capacity. After all, they let our cloud solutions benefit from superior scalability, high availability and performance. On top of that, the cost of cloud computing services seems perfectly reasonable, especially compared with a wholly bespoke datacenter setup. Or does it? Look closer, and there are certainly cases where a developer’s error or a poorly designed architecture ends up producing exorbitant cloud hosting costs. To see how this happens in practice, let’s go over three cloud computing case studies.

Cloud Infrastructure Costs Gone Wild: GCP and Cloud BigQuery

First, in one of our recent projects, we helped our client run the cloud infrastructure of their fully automated, real-time SEO platform. The solution rested in the safe familiarity of Google’s popular data centres (i.e. Google Cloud Platform), while also making use of BigQuery, a serverless, multi-cloud data warehouse.

In BigQuery, reading data is chargeable. On-demand queries are billed by the number of bytes they read, and at the time of this project Google charged $5 per TB, with the first 1 TB each month free. On balance, that doesn’t sound too bad, does it?
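To make the pricing concrete, here is a rough cost model in Python. It is only a sketch built on the figures quoted above ($5 per TB, first 1 TB free per month); it assumes 1 TB = 10^12 bytes, ignores BigQuery’s own rounding and minimum-billing rules, and the workload in the usage line is hypothetical.

```python
# Rough on-demand BigQuery cost model using the figures quoted in the article.
# Assumptions: $5 per TB, 1 TB free per month, 1 TB = 10**12 bytes.
# Real billing rules (rounding, per-query minimums, current prices) may differ.
PRICE_PER_TB_USD = 5.0
FREE_TB_PER_MONTH = 1.0
BYTES_PER_TB = 10**12


def monthly_query_cost(queries_per_hour: int, bytes_per_query: int, hours: int = 24 * 30) -> float:
    """Estimate one month's on-demand query bill for a steady query rate."""
    scanned_tb = queries_per_hour * hours * bytes_per_query / BYTES_PER_TB
    billable_tb = max(0.0, scanned_tb - FREE_TB_PER_MONTH)  # free tier comes off first
    return billable_tb * PRICE_PER_TB_USD


# Hypothetical workload: 10,000 SELECTs an hour, each scanning 1 GB.
print(monthly_query_cost(10_000, 10**9))  # 35995.0
```

With a hypothetical 1 GB read per query, a modest 10,000 queries an hour already comes to roughly $36,000 a month, which is why the number of queries matters as much as their size.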

When a solution is poorly designed, however, an excessive number of SELECT requests can turn BigQuery into a money burner 🔥.

This is exactly what we found when we examined how the solution was accessing the ClickStream data.

Due to the sheer volume of data, the solution had to send tens of thousands of SELECT requests per hour. For every website using our client’s SEO solution, every visit to one of its pages had to be recorded in the database, and before recording it, the solution ran additional checks, each of which required a SELECT request. So for websites with a high number of daily visitors, the number of such requests inevitably ran into the hundreds of thousands.
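One common way to cut this kind of traffic, assuming the pre-insert check is a lookup whose answer can safely be reused for a few minutes, is to cache its result so that repeated visits to the same page do not each trigger a fresh SELECT. The sketch below is hypothetical: CachedCheck, the five-minute TTL and the fake query are illustrative, not the client’s actual fix.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class CachedCheck:
    """Reuse the result of an expensive lookup (such as a BigQuery SELECT) for ttl_seconds."""

    def __init__(
        self,
        lookup: Callable[[Hashable], bool],
        ttl_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._lookup = lookup
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: Dict[Hashable, Tuple[float, bool]] = {}

    def __call__(self, key: Hashable) -> bool:
        now = self._clock()
        cached = self._cache.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # fresh enough: no query sent
        result = self._lookup(key)  # cache miss or stale entry: one real query
        self._cache[key] = (now, result)
        return result


# Usage with a stand-in for the real query (hypothetical):
queries_sent = []


def fake_select(page_url: Hashable) -> bool:
    queries_sent.append(page_url)
    return True


check = CachedCheck(fake_select, ttl_seconds=300)
for _ in range(1_000):  # 1,000 visits to the same page...
    check("https://example.com/pricing")
print(len(queries_sent))  # ...cost a single SELECT: prints 1
```

A production version would also bound the cache’s size; the design choice here is simply to trade a little staleness for far fewer billable queries.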

The client had $100,000 in free credit, kindly provided by Google Cloud, but the hosting costs were such that a portion of it was used up surprisingly quickly, before the developers were able to recognise and fix the problem.

Cloudflare Apps and a Request Chaining Loop

For our second cloud engineering case study, we discovered yet another money trap, one that brought the same customer to a complete standstill. Because their platform was tightly coupled with Cloudflare’s features, they also used Cloudflare Apps. One of these custom applications in particular, which we’ll call the AppLogger, gathered statistics about every request sent to a website.

The AppLogger had to be installed within Cloudflare for every website one wanted to track. It would then report each request that website received to a separate tracking platform.

At some point, however, developers decided to install the AppLogger on the domain of the tracking platform itself. After all, who wouldn’t want to pore over some interesting statistics about themselves? Unfortunately, this created a feedback loop: the logger registered every incoming request to the platform and reported it to the platform, which generated a new incoming request, which it then also reported… and so on, around the merry-go-round forever.

Though initially triggered by a single request, the situation quickly evolved into a death spiral:

- The number of requests to Cloudflare was growing rapidly (with coins rattling as they poured out of the budget sack)

- The number of requests to the tracking platform’s API was also growing rapidly (which tore an even bigger hole in the budget). And finally…

- After some time, the unending request chaining loop was also making the API unresponsive.

In our case, this crashed Nginx completely.

As the icing on the cake, every one of these requests contained a base64-encoded URL, and with every new iteration the requests grew longer:

- seoplatform.domain/post?requestURLInBase64

- seoplatform.domain/post?requestURLInBase64requestURLInBase64

- seoplatform.domain/post?requestURLInBase64requestURLInBase64requestURLInBase64

- seoplatform.domain/post?requestURLInBase64requestURLInBase64requestURLInBase64requestURLInBase64

…

All of this could easily have been avoided had the self-sent requests been handled properly. In the end, adding a few simple validations was enough to fix the problem.
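The kind of validation that breaks such a loop can be sketched as follows. This is a minimal illustration, not the actual fix: TRACKER_HOST, MAX_URL_LENGTH, should_report and the loop simulation are all hypothetical, and the real AppLogger ran inside Cloudflare rather than as a Python function.

```python
from urllib.parse import urlsplit

TRACKER_HOST = "seoplatform.domain"  # hypothetical: the tracking platform's own host
MAX_URL_LENGTH = 2048                # hypothetical sanity cap on reported URLs


def should_report(request_url: str) -> bool:
    """Validation applied before the logger reports a request to the tracker."""
    if urlsplit(request_url).hostname == TRACKER_HOST:
        return False  # never report requests aimed at the tracker itself
    if len(request_url) > MAX_URL_LENGTH:
        return False  # ever-growing URLs are a symptom of a loop
    return True


def reports_triggered(first_url: str, guarded: bool, max_hops: int = 1_000) -> int:
    """Simulate how many reports a single incoming request sets off."""
    reports, url = 0, first_url
    for _ in range(max_hops):
        if guarded and not should_report(url):
            break
        reports += 1
        # The report is itself a new request to the tracker, where the logger
        # is also installed, so it becomes the next "incoming" request.
        url = f"https://{TRACKER_HOST}/post"
    return reports


print(reports_triggered("https://shop.example/", guarded=False))  # 1000 (stops only at the cap)
print(reports_triggered("https://shop.example/", guarded=True))   # 1
```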

Lost in Translation — AWS Translate Costs Skyrocket

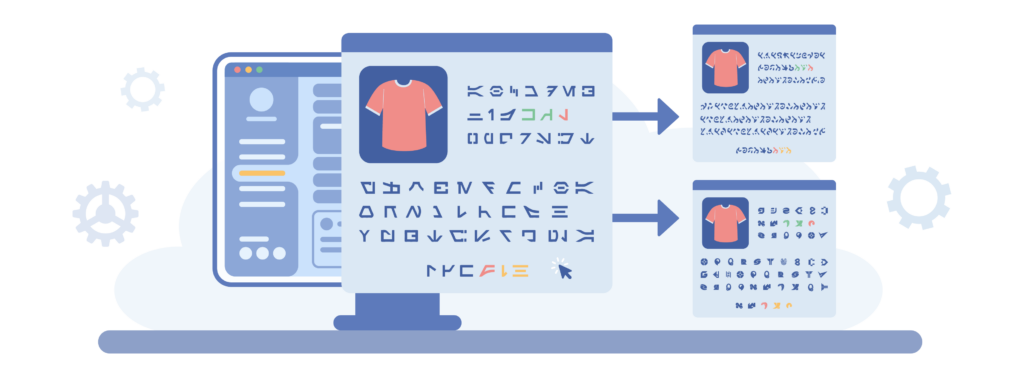

For our final case study, our customer was a European fashion eCommerce startup that had built a data aggregation platform offering users an AI-assisted shopping experience.

Chasing an ambitious deadline, the customer requested that their cloud infrastructure service team be enlarged as a matter of urgency. However, because the request came at extremely short notice, it was impossible to do so within the desired timeframe. Believing there was no time to waste, the customer brought in a third-party freelancer to help develop the project. The newcomer’s mission was both to assist the main team and to implement a multi-language support feature, which had already been promised to prospective investors. The customer therefore tasked the developer with building a separate service that would receive product descriptions and translate them into English with the help of the AWS Translate service. All the main team had to worry about was coordinating the future integration of the module. The risks of the new hire’s unfamiliarity with the project codebase were duly noted and accepted, and work began shortly thereafter.

Work had officially begun, but the results were not looking very promising. The freelancer constantly approached the team with additional requests for help, which inevitably delayed the team’s own deliverables. Time seemed to be moving faster by the minute: the deadline was already on the horizon, and yet the new module’s fate was questionable at best.

Understandably, the disgruntled customer eventually suspended the freelancer’s contract and asked the main team to finish his work. To offset the extra demand and lack of capacity, the list of requirements was also cut down. Integrating the module proved more challenging than expected, but in the end it was accomplished. Soon, automatic translations were enabled and the application supported multiple languages for both product names and descriptions.

Cloud Infrastructure Costs: a Rude Awakening

A few months later, our project manager received a wake-up call from the customer, who had just been confronted with his latest bill for the AWS Translate service. The invoice was seven times higher than any previous one, and the amount was completely unaffordable. Stripping away the richness of his colourful vocabulary, the customer essentially had two questions for us: “what the *bleep* is going on?” and “how the *expletive* do we stop it?”

The immediate priority was to halt all requests to the AWS Translate service. In practice, this meant making sure that none of our environments, be they test or production servers, were sending it any more requests. The next step was to understand exactly what had happened and why.

We knew we had to talk to the AWS Translate support team, but to do this, we would also need some data. So we gathered all the relevant information, including a description of the module’s working logic and statistics on the amount of data it exchanged with AWS Translate, and set out to tackle the problem. The module combined the product name and its description into a single string so that both could be translated in one request, with the aim of saving on translation costs. A unique separator in the string marked where one logical section ended and the next began.

Armed with this information, we provided the support team with everything we could find and eagerly awaited their feedback. In the meantime, dying to get to the bottom of the incident, we carried on with our own analysis. In the source code, we narrowed the issue down to an additional piece of functionality that kept re-sending the data for translation every time the previous attempt had failed. Working closely with the AWS Translate support team, we also found that AWS Translate altered the separator during translation, so by the time the translated text came back to our client’s application, the separator was nowhere to be seen.

This separator had been chosen by the freelancer. It originally looked like this: |000|, but after translation it turned into: /000|. Here is a Dutch-to-English example:

Before translation: Grijs broeken|000|Geweldige beschrijving van een broek

After translation: Grey trousers/000|Great description of trousers
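The combine-then-split logic can be sketched in a few lines of Python, using the |000| separator from the example above. The function names are hypothetical; the point is that a strict split fails loudly as soon as the separator comes back altered.

```python
from typing import Tuple

SEPARATOR = "|000|"  # the separator chosen for the module


def combine(name: str, description: str) -> str:
    """Join name and description so both travel in a single translation request."""
    return f"{name}{SEPARATOR}{description}"


def split_translation(text: str) -> Tuple[str, str]:
    """Split a translated string back into (name, description), failing loudly."""
    parts = text.split(SEPARATOR)
    if len(parts) != 2:
        raise ValueError(f"expected 1 separator, found {len(parts) - 1}: {text!r}")
    return parts[0], parts[1]


print(combine("Grijs broeken", "Geweldige beschrijving van een broek"))
print(split_translation("Grey trousers|000|Great description of trousers"))
try:
    split_translation("Grey trousers/000|Great description of trousers")
except ValueError as err:
    print("cannot split:", err)
```

Failing loudly is deliberate: a silent fallback would store mangled text instead of surfacing the problem.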

Okay, this could presumably lead to an error and cause some trouble when displaying the layout. But what did any of it have to do with the bill?

Well, haste is often said to be the enemy of quality. In the rush to meet the deadline, the translation functionality had been tested thoroughly only on the data provided by the customer. As it turned out, that data was significantly different from the real-world data coming from various retailers. For one, the descriptions were considerably longer:

Product name: Women's Gingham Golf Joggers

Description: If you're looking for the perfect, versatile trousers to add to your wardrobe, look no further than the Dri-FIT UV Victory sweatpants. Hem cuffs, drawstring waistband and checkered design can be used. Easy to combine with a polo for the court or with a casual top for shopping or out with friends. This product is made with at least 50% recycled polyester fibres.

This difference in text length became another accomplice to the avoidable crime. After translation, the system stored the text in a single database column as one paragraph: product name + separator + description. It would later retrieve that paragraph and split it up again using the separator.

When the text was relatively short, everything worked without a problem. But as soon as the paragraph exceeded 255 characters, the actual limit of the DB column, an error occurred and the translation could no longer be recorded in the database. This triggered a “translation is missing” event in the module. As a result, on its next scheduled run, the module detected that the translation for some of the products was “oh sh*t… missing!” and dutifully requested it from AWS Translate again. And again. And again… And so the translation module got stuck in an infinite loop.
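The following hypothetical model reproduces the loop described above: a save that fails past 255 characters leaves the translation “missing”, so every scheduled run pays for it again. All names are illustrative, the translator is a stand-in that echoes its input, and the max_attempts cap is one possible mitigation we are assuming here, not the fix that was actually applied.

```python
from typing import Callable, Dict, Optional

COLUMN_LIMIT = 255  # the database column limit described in the article


class ValueTooLong(Exception):
    pass


class TranslationStore:
    """Stand-in for the single DB column holding 'name + separator + description'."""

    def __init__(self) -> None:
        self.rows: Dict[int, str] = {}

    def save(self, product_id: int, text: str) -> None:
        if len(text) > COLUMN_LIMIT:
            raise ValueTooLong(f"{len(text)} > {COLUMN_LIMIT} characters")
        self.rows[product_id] = text


def scheduled_run(
    products: Dict[int, str],
    store: TranslationStore,
    translate: Callable[[str], str],
    attempts: Dict[int, int],
    max_attempts: Optional[int] = None,
) -> None:
    """One pass of the module: translate every product whose translation is missing."""
    for product_id, source_text in products.items():
        if product_id in store.rows:
            continue  # translation already stored
        if max_attempts is not None and attempts.get(product_id, 0) >= max_attempts:
            continue  # give up and leave it for a human instead of paying again
        attempts[product_id] = attempts.get(product_id, 0) + 1
        translated = translate(source_text)  # every call here is billed
        try:
            store.save(product_id, translated)
        except ValueTooLong:
            pass  # still "missing", so the next run will translate it again


# Hypothetical catalogue: 1 short product, 3 long ones (mirroring the 25/75 split).
calls = []


def fake_translate(text: str) -> str:
    calls.append(text)
    return text


catalogue = {1: "Grey trousers|000|Short text", 2: "x" * 300, 3: "y" * 300, 4: "z" * 300}

store, attempts = TranslationStore(), {}
for _ in range(30):  # thirty scheduled runs, no retry cap
    scheduled_run(catalogue, store, fake_translate, attempts)
print(len(calls))  # 91 billed translations for 4 products

calls.clear()
store, attempts = TranslationStore(), {}
for _ in range(30):  # the same thirty runs with a cap of 3 attempts
    scheduled_run(catalogue, store, fake_translate, attempts, max_attempts=3)
print(len(calls))  # 10
```

A retry cap only limits the damage; the underlying fix is still to store the name and description in columns large enough for real-world text.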

Upon further examination, we discovered that while only 25% of product information was being saved successfully, the remaining 75% was simply re-queued for translation. This vicious circle of sin could only be broken by stopping the web application entirely.

As if all of this were not enough, the “lucky” 25% of products were in fact no better off either. Their information could not be displayed properly in other languages because of the “spoiled” separator: it was literally getting lost in translation, and the application’s logic was no longer able to split the text into the required fragments. Though once supported by AWS, the “|” separator had become deprecated and had to be replaced with a dedicated tag marking text that should not be translated, e.g. <span translate="no"></span>.
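A sketch of the tag-based approach in Python, assuming (as described above) that the service leaves content inside a translate="no" element untouched and that the text is submitted in a form where that HTML markup is recognised. The AWS call itself is omitted, and MARKER, combine_for_translation and split_translated are hypothetical names.

```python
import html
import re
from typing import Tuple

# Hypothetical marker: the old separator wrapped in a "do not translate" element.
MARKER = '<span translate="no">|000|</span>'
# Tolerate harmless changes to the markup (quote style, whitespace, letter case).
MARKER_RE = re.compile(
    r"""<span\s+translate\s*=\s*["']no["']\s*>\s*\|000\|\s*</span>""", re.IGNORECASE
)


def combine_for_translation(name: str, description: str) -> str:
    # Escape product text so a stray "<" or "&" is not mistaken for markup.
    return f"{html.escape(name)}{MARKER}{html.escape(description)}"


def split_translated(text: str) -> Tuple[str, str]:
    parts = MARKER_RE.split(text)
    if len(parts) != 2:
        raise ValueError(f"expected exactly one marker, found {len(parts) - 1}")
    name, description = (html.unescape(p) for p in parts)
    return name, description


print(combine_for_translation("Grijs broeken", "Geweldige beschrijving van een broek"))
print(split_translated('Grey trousers<span translate="no">|000|</span>Great description of trousers'))
```

Splitting on a tolerant pattern rather than the exact marker string means small, harmless rewrites of the tag do not break the round trip, while a missing or duplicated marker still raises an error.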

All of these findings led us to change the module’s logic, improve its error handling, and negotiate with the AWS Translate team to settle the accumulated “debt”.